Section 8: Use PDSA Cycles

Is this section for me?

After developing a data collection plan and establishing baseline data, we recommend that organizations continue to this section for guidance on conducting Plan-Do-Study-Act (PDSA) cycles. Before beginning this section, let’s see if you are ready to begin PDSA cycles. Do you:

- Have a clearly defined implementation plan?

- Have a small change you want to try?

- Have a clear aim for what you are trying to achieve?

- Know how you will tell if the change is making a difference (measures or indicators)?

- Have a general sense of how you will capture what you learn?

If so, you are ready to begin the work outlined in this section. If not, you may want to revisit earlier sections to clarify your aim, measures, or plan before moving forward.

Introduction to PDSA Cycles

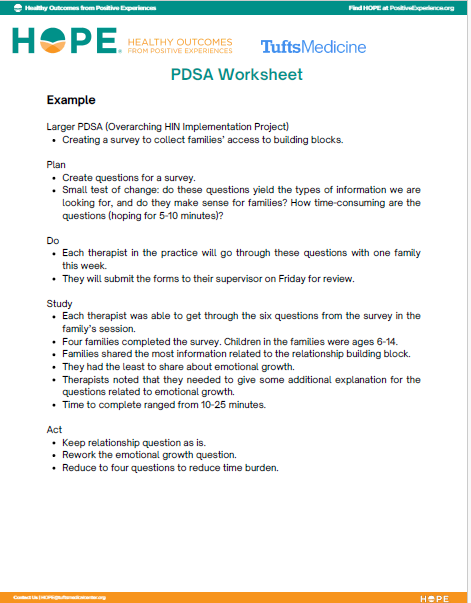

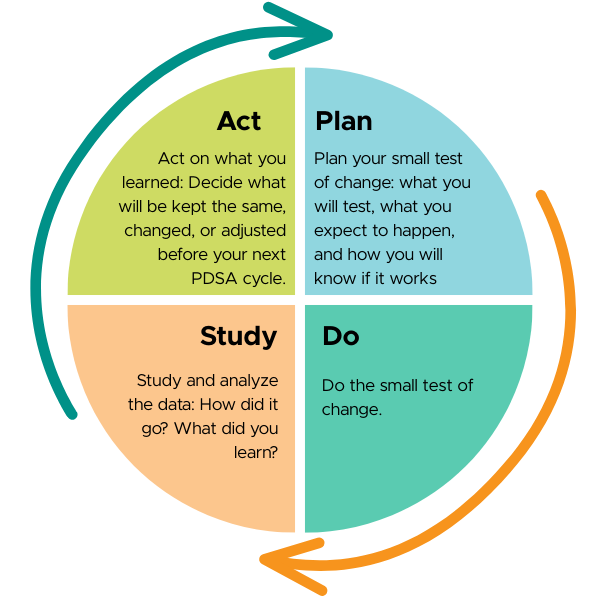

PDSA stands for Plan – Do – Study – Act. It is a simple way to test one small change at a time:

- Plan what you will test, what you expect to happen, and how you will know whether it worked

- Do the test on a small scale

- Study what you learned

- Act based on what you learned

PDSA cycles help teams:

- Learn quickly by testing changes on a small scale before committing to large changes

- See how ideas work in real settings

- Adjust based on what happens

- Build confidence through testing, not guessing

- Decide what to do next

PDSA cycles are about learning, not getting it right the first time, and they work best when they focus on one action step, not an entire project.

How this step fits into the larger QI process

PDSA cycles are not a single step to complete and move past. They are a way of working that can be used once a project is underway and throughout implementation and data collection.

Teams often begin using PDSA cycles after initial planning is complete and baseline data has been established. From there, PDSA cycles can be used repeatedly to:

- Test small adjustments to implementation

- Respond to what the data is showing

- Explore questions that arise during the work

- Refine processes over time

Because implementation of the HOPE framework is adaptive and context-specific, there is no fixed endpoint for PDSA cycles. Teams may return to them whenever they want to learn something new, address a challenge, or strengthen an approach.

Guidance and questions to ask

Plan

Start with one action step from your implementation plan and focus on testing it at a manageable scale. Ask:

- What is one small thing we can try?

- Where can we test this?

- Who needs to be involved for this test?

- How will we know if it worked?

Smaller tests make learning easier to see and act on. When a test is limited in scope, it becomes clearer what worked, what didn’t, and why.

- If a test requires multiple approvals, extensive training, or system-wide rollout, it is likely too large for a first PDSA.

- A test can be very small – one person, one interaction, or one day is often enough to learn something useful.

- If a test feels easy to try and easy to undo, it is probably the right size.

Examples of small tests might include:

- Trying a new intake question with a few families

- Testing a revised form with one staff member for one week

- Using a HOPE-aligned script in a few conversations

- Reviewing data at one team meeting instead of changing a full workflow

- Piloting a change in one classroom, unit, or shift

Starting small reduces risk, supports learning, and builds confidence to continue testing and refining over time.

Be sure that your test is focused. Before you start, discuss:

- What you expect to happen

- What data you will look at

- How you will decide whether what you tried “worked”

Naming these in advance makes it easier to trust what you learn from the test, even when results are mixed or unexpected.

Do

Carry out the change as planned. As you do, pay attention not just to whether the step was completed, but to how it unfolded in real work:

- What happened?

- What was harder or easier than expected?

- Where did the process feel smooth, awkward, or surprising?

- How did staff or families respond or engage?

- What questions or reactions emerged along the way?

These observations help you make sense of the data later and often point to what should be adjusted next.

Study

This step is about making sense of both the data and the experience of the change. After the test, take time together to look at what you learned:

- What did we notice while the test was happening?

- What surprised us or didn’t go as expected?

- What patterns or signals are showing up?

- How did staff or families experience the change?

- What helped this work, and what got in the way?

Learning includes both data and experience. Looking at them together helps teams understand not just whether a change worked, but how and why it did or didn’t.

Act

Use what you learned from the test to decide what comes next. Consider:

- What parts should we keep as they are?

- What parts should be adjusted based on what we learned?

- What is the next small test we want to try?

Your decision does not need to be final. Acting may mean refining the same idea, trying it in a slightly different way, or pausing to address a barrier before moving forward.

As you act, you may update your implementation plan to reflect what you’ve learned. This helps the plan stay realistic, responsive, and aligned with how the work is actually unfolding.

Tools and templates

Here are a few additional, optional tools that may help as you work through the guidance and questions above.

Reflection prompts

- How might sharing this learning strengthen trust, relationships, or connection with others?

- Who could feel more seen, supported, or respected if we shared this thoughtfully?

- What context do we need to include so this learning is understood as part of growth, not judgment?

- How does what we are learning connect to our purpose or values, not just our outcomes?

- What kind of sharing would support hope, clarity, or engagement at this stage of the work?

In practice: Using and sharing learning

By this point, Riverside Middle School had completed several small tests (PDSAs), collected early outcome data and staff feedback, and made targeted adjustments to their approach based on what they were learning.

The team shared learning in simple, regular ways. During standing monthly staff meetings, they used one or two slides to summarize what had been tested, what the data and observations suggested, and what they planned to try next. These updates focused on learning and decision-making rather than reporting results.

At key points during the project (planning, launch, and midway through), school leadership shared brief written summaries with district partners. These updates highlighted the aim, early signals of change, and questions still being explored, clearly framing the work as an ongoing learning experience.

The team also planned a more formal share-out at the end of the school year, using their post-project data to reflect on overall impact, identify lessons learned, and inform decisions about whether and how to continue or expand the work in the following year.

Keep going!

Implementation, testing, and data collection happen together. As you learn, it’s important to pause, reflect on, and share what you’re noticing.

You’re ready to move on if:

- You have begun testing small changes

- You are using data and reflection to guide adjustments

- Your team is learning from what is working and what is not